The words of the corpus were then annotated with part-of-speech labels, using a combination of automated labeling and laborious hand correction. It includes 500 samples of English-language text, totaling roughly one million words, compiled from works published in the United States in 1961. This collection is known as the Brown Corpus. In the 1960’s, linguists from Brown University created the first “large” collection of text. Early on in the advent of computers, some researchers surmised the importance of collecting unsolicited examples of naturally occurring text and performing quantitative analyses to inform our understanding.

Today, best practice for many subtasks of natural language processing involves working with large collections of text, each of which comprises “a corpus”. 3.1 The Role of Corpora in Understanding Syntax

Moreover, both of these physiological approaches rely on experts to hypothesize about the structure of language, conduct experiments to elicit human behavior, and then generalize from a relatively small set of observations. Although such physiological evidence is less subject to bias, the cost of the equipment and the difficulty of using it has limited the scale of such studies. As an alternative, there have been attempts to use evidence of language structure obtained directly from physical monitoring of people’s eyes (via eye tracking) or brains (via event-related potentials measured by electroencephalograms) while they process language. There is always some risk of experimental bias when we depend on the judgements of untrained native speakers to define the legal structures of a language. The fact that we can substitute the verb “tolerate” for “deal with” is evidence that “deal with” is a single entity. For example, in the sentence “I would rather deal with the side effects of my medication”, one might wonder if “with” is part of a complex verb “deal with” or it acts as a preposition, which is a function word more associated with the noun phrase “the side effects”. This technique is still useful today as a means of verifying the syntactic labels of rarely seen expressions. What forms a legal constituent in a given language was once determined qualitatively and empirically: early linguists would review written documents, or interview native speakers of language, to find out what phrases native speakers find acceptable and what phrases or words can be substituted for one another and still be considered grammatical. The conventions for describing syntax arise from two disciplines: studies by linguists, going back as far as 8th century BCE by the first Sanskrit grammarians, and work by computational scientists, who have standardized and revised the labeling of syntactic units to better meet the needs of automated processing. 
Most current NLP work uses around 35 labels for different parts of speech, with additional labels for punctuation. By contrast, the first published guideline for annotating part of speech created by linguists used eighty different categories. The folks who watched Schoolhouse Rock also were taught there were eight, but a slightly different set.

If one were to ask how many categories of words are there, the writing center at a university might say there are eight different types of parts of speech in the English language: noun, pronoun, verb, adjective, adverb, preposition, conjunction, and interjection. One of the challenges faced in NLP work is that terminology for describing syntax has evolved over time and differs somewhat across disciplines. The categories of words used for NLP are mostly similar to those used in other contexts, but in some cases they may be different from how you were taught when you were learning the grammar of English.

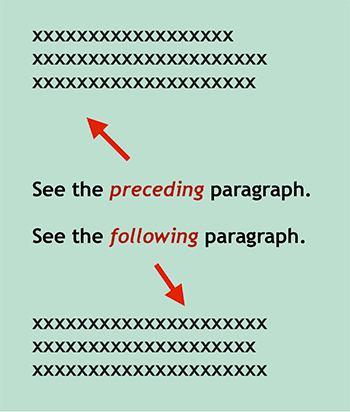

Sentences can be combined into compound sentences using conjunctions, such as “and”. The structures include words (which are the smallest well-formed units), phrases (which are legal sequences of words), clauses and sentences (which are both legal sequences of phrases). To keep the set conventions manageable, and to reflect how native speakers use their language, syntax is defined hierarchically and recursively. For example, in English, the sequence “cat the mat on” is not well-formed. Syntax is the set of conventions of language that specify whether a given sequence of words is well-formed and what functional relations, if any, pertain to them. The order in which words and phrases occur matters.
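The hierarchical, recursive definition of syntax described above (words inside phrases, phrases inside clauses, clauses joined into compound sentences) can be made concrete with a small sketch. The lexicon, the grammar rules, and all function names below are hypothetical choices made purely for illustration; they are not a real grammar of English, and the recognizer is greedy and does no backtracking.

```python
# Hypothetical toy lexicon: each word maps to a single part-of-speech label.
LEXICON = {
    "the": "DET", "a": "DET",
    "cat": "N", "dog": "N", "mat": "N",
    "sat": "V", "slept": "V", "saw": "V",
    "on": "P",
    "and": "CONJ",
}


def tag_at(tokens, i):
    """Return the label of tokens[i], or None past the end or for unknown words."""
    return LEXICON.get(tokens[i]) if i < len(tokens) else None


def parse_np(tokens, i):
    """NP -> DET N [PP]. Return the index just after the phrase, or None."""
    if tag_at(tokens, i) == "DET" and tag_at(tokens, i + 1) == "N":
        i += 2
        after_pp = parse_pp(tokens, i)  # optional prepositional phrase
        return after_pp if after_pp is not None else i
    return None


def parse_pp(tokens, i):
    """PP -> P NP. A phrase that contains another phrase: this is the recursion."""
    if tag_at(tokens, i) == "P":
        return parse_np(tokens, i + 1)
    return None


def parse_vp(tokens, i):
    """VP -> V [NP] [PP]."""
    if tag_at(tokens, i) != "V":
        return None
    i += 1
    for parse_optional in (parse_np, parse_pp):
        after = parse_optional(tokens, i)
        if after is not None:
            i = after
    return i


def parse_clause(tokens, i):
    """Clause -> NP VP."""
    after_np = parse_np(tokens, i)
    return parse_vp(tokens, after_np) if after_np is not None else None


def is_well_formed(sentence):
    """Sentence -> Clause (CONJ Clause)*; every token must be consumed."""
    tokens = sentence.lower().split()
    i = parse_clause(tokens, 0)
    while i is not None and tag_at(tokens, i) == "CONJ":
        i = parse_clause(tokens, i + 1)
    return i is not None and i == len(tokens)
```

Under these toy rules, `is_well_formed("the cat sat on the mat")` returns `True`, while `is_well_formed("cat the mat on")` returns `False` because no rule lets a noun phrase begin with a bare noun. A compound sentence such as "the cat sat on the mat and the dog slept" is accepted because the top-level rule allows clauses to be joined by a conjunction.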
